How to Write Data Analysis Reports in 9 Easy Steps


Imagine a bunch of bricks. They don’t have a purpose until you put them together into a house, do they?

In business intelligence, data is your building material, and a quality data analysis report is what you want to see as the result.

But if you’ve ever tried to use the collected data and assemble it into an insightful report, you know it’s not an easy job to do. Data is supposed to tell a story about your performance, but there’s a long way from unprocessed, raw data to a meaningful narrative that you can use to create an actionable plan for making steady progress towards your goals.

This article will help you improve the quality of your data analysis reports and build them effortlessly and fast. Let’s jump right in.

What Is a Data Analysis Report?


A data analysis report is a type of business report in which you present quantitative and qualitative data to evaluate your strategies and performance. Based on this data, you give recommendations for further steps and business decisions while using the data as evidence that backs up your evaluation.

Today, data analysis is one of the most important elements of business intelligence strategies, as companies have realized that having data-driven insights at hand helps them make better decisions.

Just like you’ll look at your car’s dashboard if something’s wrong, you’ll pull your data to see what’s causing drops in website traffic, conversions, or sales – or any other business metric you may be following. This unprocessed data still doesn’t give you a diagnosis – it’s the first step towards a quality analysis. Once you’ve extracted and organized your data, it’s important to use graphs and charts to visualize it and make it easier to draw conclusions.

Once you add meaning to your data and create suggestions based on it, you have a data analysis report.

Two things everyone should know about data analysis reports are that they make data accessible to everyone on your team and that they help you innovate. Your analysis report will contain your vital KPIs, so you can see where you’re reaching your targets and achieving goals, and where you need to speed up your activities or optimize your strategy. If you can uncover trends or patterns in your data, you can use them to innovate and stand out by offering even more valuable content, services, or products to your audience.

Why Is Data Analysis Reporting Important?

Data analysis is vital for companies for several reasons.

A reliable source of information

Trusting your intuition is fine, but relying on data is safer. When you can base your action plan on data that clearly shows that something is working or failing, you won’t only justify your decisions in front of the management, clients, or investors, but you’ll also be sure that you’ve taken appropriate steps to fix an issue or seize an important opportunity.

A better understanding of your business

According to Databox’s State of Business Reporting, most companies stated that regular monitoring and reporting improved progress monitoring, increased team effectiveness, allowed them to identify trends more easily, and improved financial performance. Data analysis makes it easier to understand your business as a whole, and each aspect individually. By following each department’s KPIs over time, you can see how different departments analyze their workflow and how each step impacts their results in the end. Then, you can easily conclude what your business needs to grow: to boost your sales strategy, optimize your finances, or up your SEO game, for example.

An additional way to understand your business better is to compare your most important metrics and KPIs against companies that are just like yours. With Databox Benchmarks, you will need only one spot to see how all of your teams stack up against your peers and competitors.

Instantly and Anonymously Benchmark Your Company’s Performance Against Others Just Like You

If you ever asked yourself:

- How does our marketing stack up against our competitors?

- Are our salespeople as productive as reps from similar companies?

- Are our profit margins as high as our peers’?

Databox Benchmark Groups can finally help you answer these questions and discover how your company measures up against similar companies based on your KPIs.

When you join Benchmark Groups, you will:

- Get instant, up-to-date data on how your company stacks up against similar companies based on the metrics most important to you. Explore benchmarks for dozens of metrics, built on anonymized data from thousands of companies and get a full 360° view of your company’s KPIs across sales, marketing, finance, and more.

- Understand where your business excels and where you may be falling behind so you can shift to what will make the biggest impact. Leverage industry insights to set more effective, competitive business strategies. Explore where exactly you have room for growth within your business based on objective market data.

- Keep your clients happy by using data to back up your expertise. Show your clients where you’re helping them overperform against similar companies. Use the data to show prospects where they really are… and the potential of where they could be.

- Get a valuable asset for improving yearly and quarterly planning. Get valuable insights into areas that need more work. Gain more context for strategic planning.

The best part?

- Benchmark Groups are free to access.

- The data is 100% anonymized. No other company will be able to see your performance, and you won’t be able to see the performance of individual companies either.

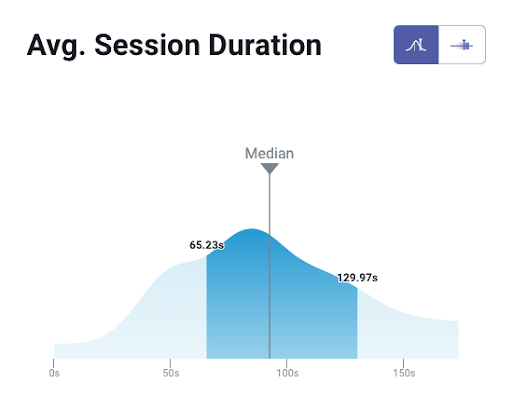

When it comes to showing you how your performance compares to others, here is what it might look like for the metric Average Session Duration:

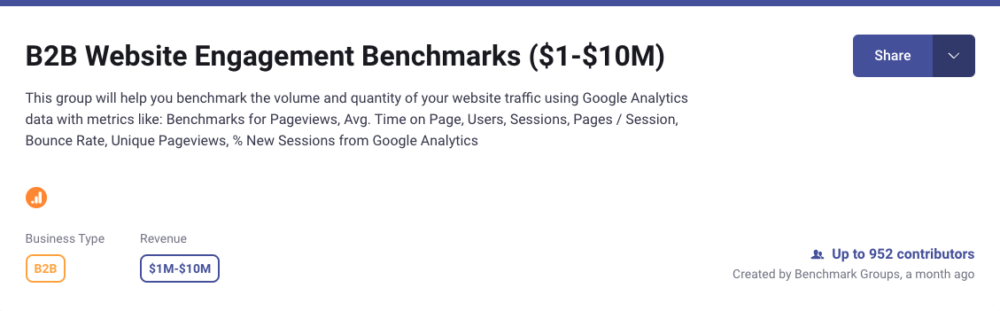

And here is an example of an open group you could join:

And this is just a fraction of what you’ll get. With Databox Benchmarks, you will need only one spot to see how all of your teams stack up — marketing, sales, customer service, product development, finance, and more.

- Choose criteria so that the Benchmark is calculated using only companies like yours

- Narrow the benchmark sample using criteria that describe your company

- Display benchmarks right on your Databox dashboards

Sounds like something you want to try out? Join a Databox Benchmark Group today!

It makes data accessible to everyone

Data is no longer a magical creature reserved for data scientists. Now that you have streamlined, easy-to-follow data visualizations and tools that automatically show the latest figures, you can include everyone in the decision-making process, because they’ll understand what each chart and table means. The data may be complex, but it becomes easy to read when combined with proper illustrations. And when your teams gain such useful and accessible insight, they will feel motivated to act on it immediately.

Better collaboration

Data analysis reports help teams collaborate better, as well. You can apply the SMART technique to your KPIs and goals, because your KPIs become assignable. When they’re easy to interpret for your whole team, you can assign each person one or multiple KPIs that they’ll be in charge of. That takes a lot off a team leader’s plate so they can focus more on making other improvements in the business. At the same time, removing inaccurate data from your day-to-day operations will reduce friction between different departments, like marketing and sales, for instance.

More productivity

You can also expect increased productivity, since you’ll save the time you’d otherwise spend waiting for specialists to translate data for other departments. This means your internal procedures will run more smoothly, too.

Want to give value with your data analysis report? It’s critical to master the skill of writing a quality data analytics report. Want to know how to report on data efficiently? We’ll share our secret in the following section.

How to Write a Data Analysis Report? 9 Simple Steps

- Start with an Outline

- Make a Selection of Vital KPIs

- Pick the Right Charts for Appealing Design

- Use a Narrative

- Organize the Information

- Include a Summary

- Careful with Your Recommendations

- Double-Check Everything

- Use Interactive Dashboards

1. Start with an Outline

If you start writing without having a clear idea of what your data analysis report is going to include, it may get messy. Important insights may slip through your fingers, and you may stray away too far from the main topic. To avoid this, start the report by writing an outline first. Plan the structure and contents of each section first to make sure you’ve covered everything, and only then start crafting the report.

2. Make a Selection of Vital KPIs

Don’t overwhelm the audience by including every single metric there is. You can discuss your whole dashboard in a meeting with your team, but if you’re creating data analytics reports or marketing reports for other departments or the executives, it’s best to focus on the most relevant KPIs that demonstrate the data important for the overall business performance.

PRO TIP: How Well Are Your Marketing KPIs Performing?

Like most marketers and marketing managers, you want to know how well your efforts are translating into results each month. How much traffic and new contact conversions do you get? How many new contacts do you get from organic sessions? How are your email campaigns performing? How well are your landing pages converting? You might have to scramble to put all of this together in a single report, but now you can have it all at your fingertips in a single Databox dashboard.

Our Marketing Overview Dashboard includes data from Google Analytics 4 and HubSpot Marketing with key performance metrics like:

- Sessions. The number of sessions can tell you how many times people are visiting your website. Obviously, the higher the better.

- New Contacts from Sessions. How well is your campaign driving new contacts and customers?

- Marketing Performance KPIs. Tracking the number of MQLs, SQLs, New Contacts and similar will help you identify how your marketing efforts contribute to sales.

- Email Performance. Measure the success of your email campaigns from HubSpot. Keep an eye on your most important email marketing metrics such as number of sent emails, number of opened emails, open rate, email click-through rate, and more.

- Blog Posts and Landing Pages. How many people have viewed your blog recently? How well are your landing pages performing?

Now you can benefit from the experience of our Google Analytics and HubSpot Marketing experts, who have put together a plug-and-play Databox template that contains all the essential metrics for monitoring your leads. It’s simple to implement and start using as a standalone dashboard or in marketing reports, and best of all, it’s free!

You can easily set it up in just a few clicks – no coding required.

To set up the dashboard, follow these 3 simple steps:

Step 1: Get the template

Step 2: Connect your HubSpot and Google Analytics 4 accounts with Databox.

Step 3: Watch your dashboard populate in seconds.

3. Pick the Right Charts for Appealing Design

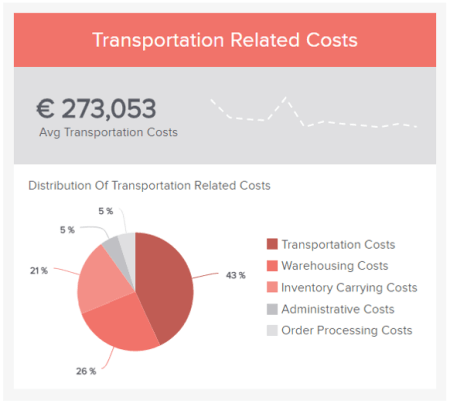

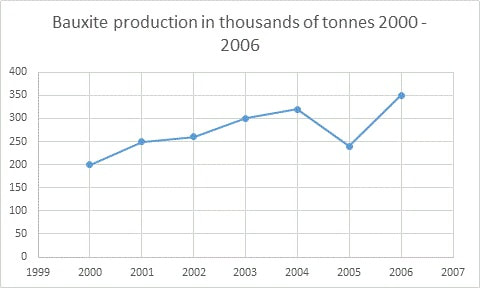

If you’re showing historical data, for instance how you performed this month compared to last month, it’s best to use timelines or line graphs. For other data, pie charts or tables may be more suitable. Make sure you use the right data visualization to display your data accurately and in an easy-to-understand manner.
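
As a minimal sketch of the month-over-month comparison described above, the Python snippet below computes the percentage change for a few metrics before you chart them. The metric names and figures are made up for illustration, and `percent_change` is a hypothetical helper, not part of any reporting tool.

```python
# Hypothetical monthly totals; replace with figures exported from your own tool.
last_month = {"sessions": 12000, "conversions": 300, "sales": 45}
this_month = {"sessions": 13200, "conversions": 270, "sales": 45}


def percent_change(previous, current):
    """Return the change from previous to current as a percentage."""
    if previous == 0:
        raise ValueError("previous value must be non-zero")
    return (current - previous) / previous * 100


# One rounded percentage per metric, ready to feed into a timeline or bar chart.
changes = {
    metric: round(percent_change(last_month[metric], this_month[metric]), 1)
    for metric in last_month
}

for metric, change in changes.items():
    print(f"{metric}: {change:+.1f}% vs. last month")
```

Showing the change as a signed percentage next to the chart makes it easier for readers to see at a glance which metrics moved and in which direction.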

4. Use a Narrative

Do you work on analytics and reporting? Just exporting your data into a spreadsheet doesn’t qualify as either of them. The fact that you’re dealing with data may sound too technical, but actually, your report should tell a story about your performance. What happened on a specific day? Did your organic traffic increase or suddenly drop? Why? And more. There are a lot of questions to answer and you can put all the responses together in a coherent, understandable narrative.

5. Organize the Information

Before you start writing or building your dashboard, choose how you’re going to organize your data. Are you going to talk about the most relevant and general metrics first? That may be the best way to start the report: best practices typically involve starting with more general information and then diving into details if necessary.

6. Include a Summary

Some people in your audience won’t have the time to read the whole report, but they’ll want to know about your findings. Besides, a summary at the beginning of your data analytics report will help the reader get familiar with the topic and the goal of the report. And a quick note: although the summary should be placed at the beginning, you usually write it when you’re done with the report. When you have the whole picture, it’s easier to extract the key points that you’ll include in the summary.

7. Careful with Your Recommendations

Your communication skills may be critical in data analytics reports. Know that some of the results probably won’t be satisfactory, which means that someone’s strategy failed. Make sure you’re objective in your recommendations and that you’re not looking for someone to blame. Don’t criticize, but give suggestions on how things can be improved. Being solution-oriented is much more important and helpful for the business.

8. Double-Check Everything

The whole point of using data analytics tools and data, in general, is to achieve as much accuracy as possible. Avoid manual mistakes by proofreading your report when you finish, and if possible, give it to another person so they can confirm everything’s in place.

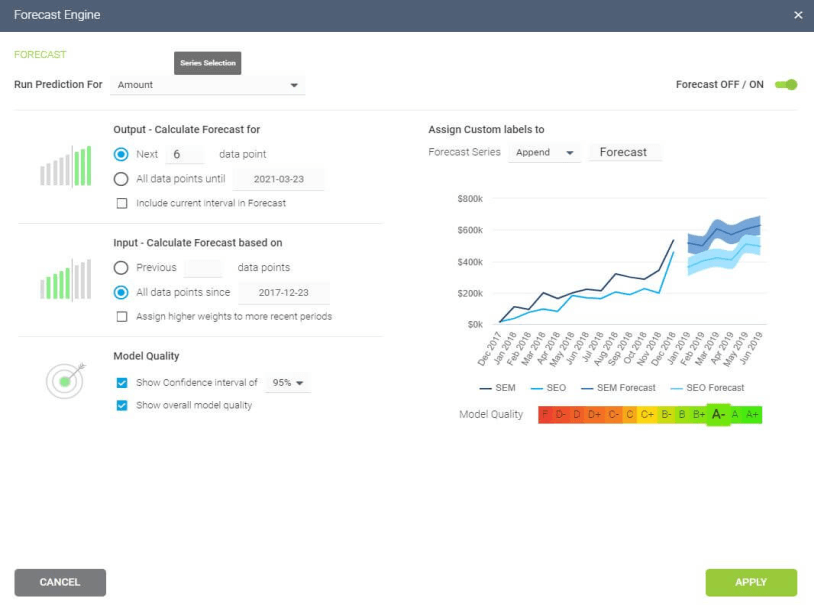

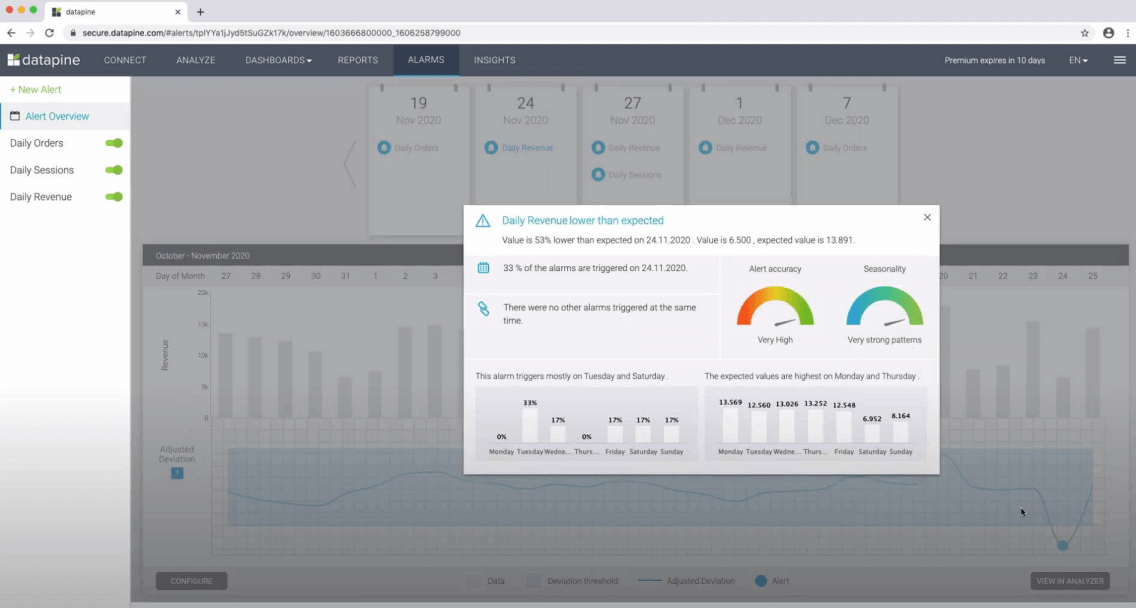

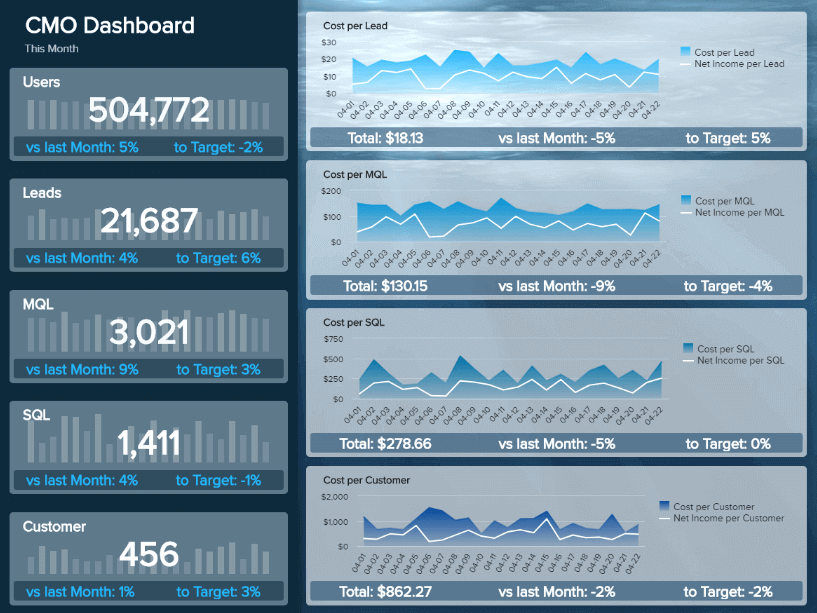

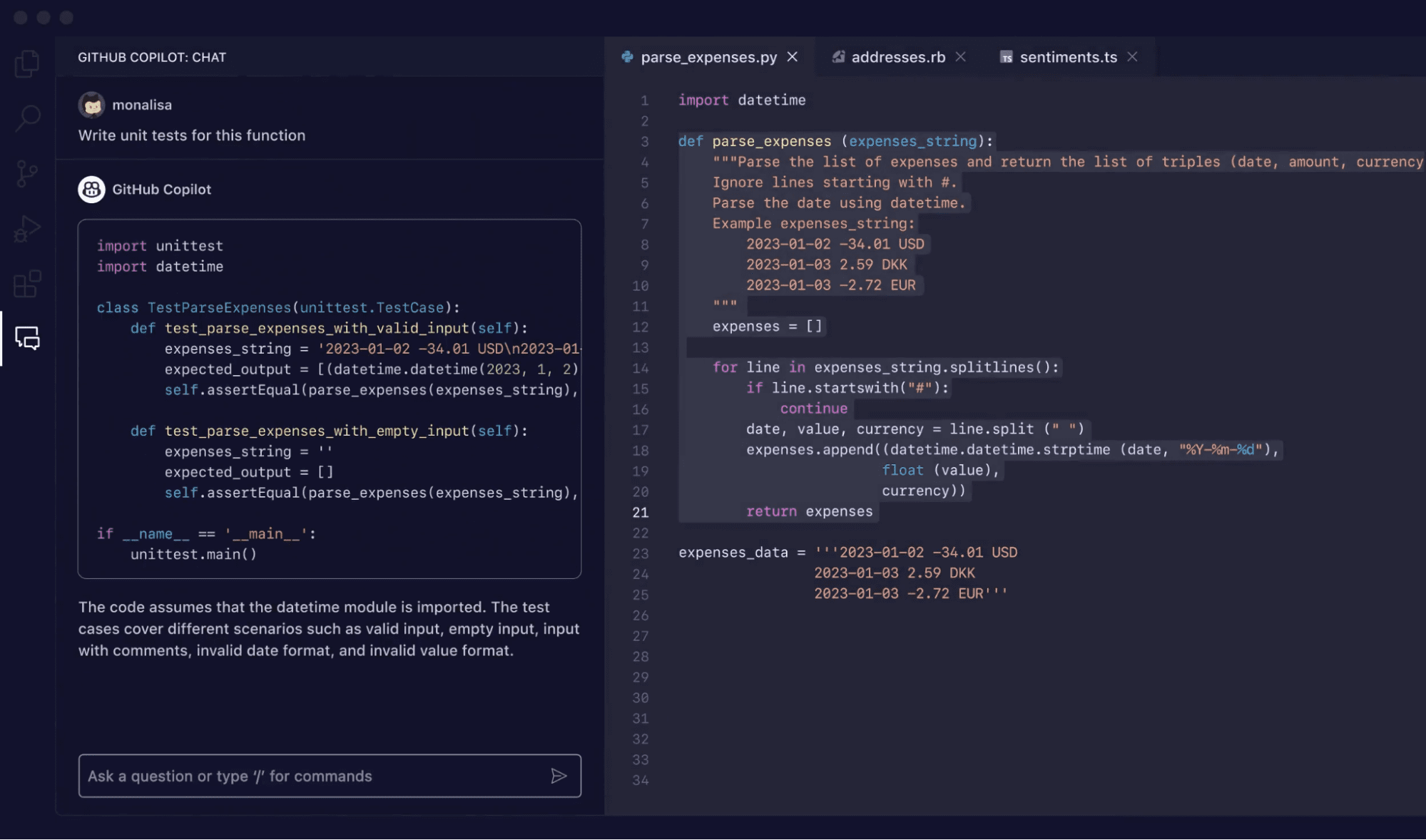

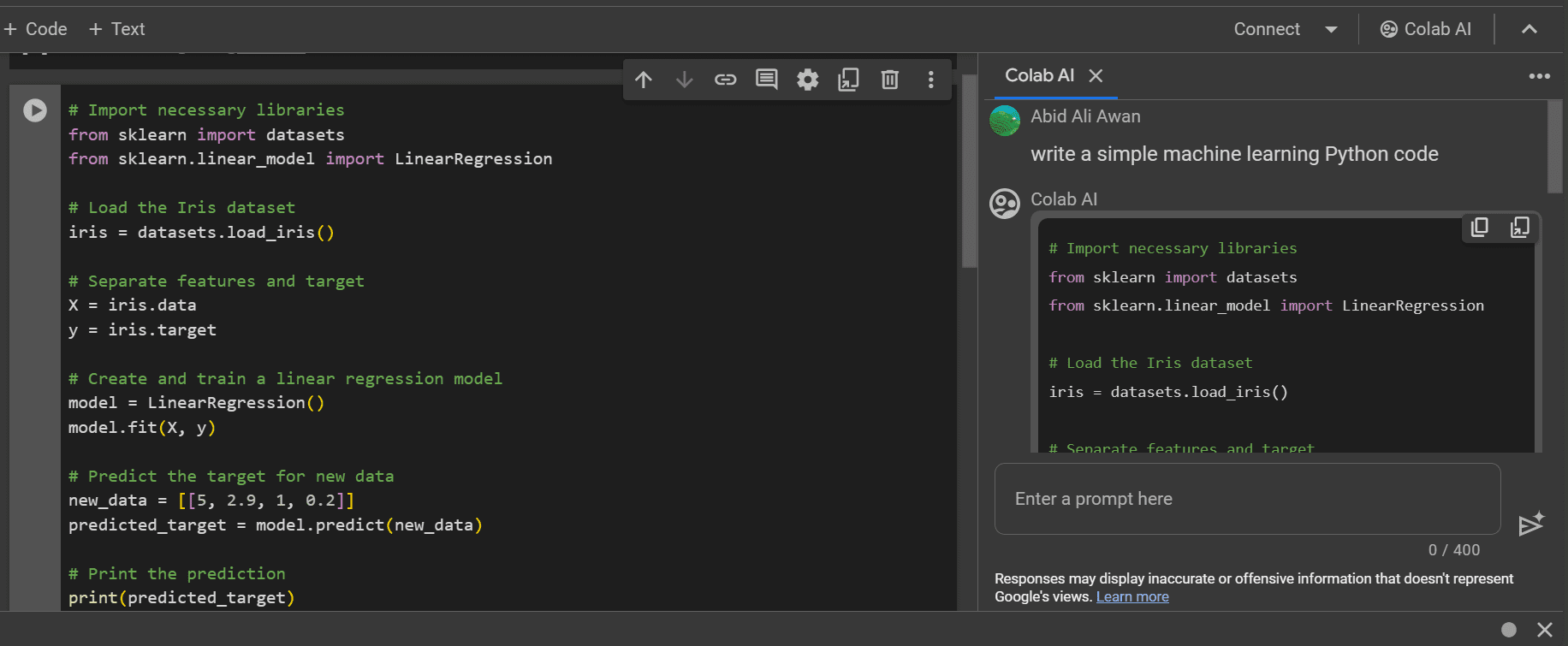

9. Use Interactive Dashboards

Using the right tools is just as important as the contents of your data analysis. The way you present it can make or break a good report, regardless of how valuable the data is. That said, choose a great reporting tool that can automatically update your data and display it in a visually appealing manner. Make sure it offers streamlined interactive dashboards that you can also customize depending on the purpose of the report.

To wrap up the guide, we decided to share nine excellent examples of what awesome data analysis reports can look like. You’ll learn what metrics you should include and how to organize them in logical sections to make your report beautiful and effective.

- Marketing Data Analysis Report Example

SEO Data Analysis Report Example

Sales data analysis report example.

- Customer Support Data Analysis Report Example

Help Desk Data Analysis Report Example

Ecommerce data analysis report example, project management data analysis report example, social media data analysis report example, financial kpi data analysis report example, marketing data report example.

If you need an intuitive dashboard that allows you to track your website performance effortlessly and monitor all the relevant metrics such as website sessions, pageviews, or CTA engagement, you’ll love this free HubSpot Marketing Website Overview dashboard template .

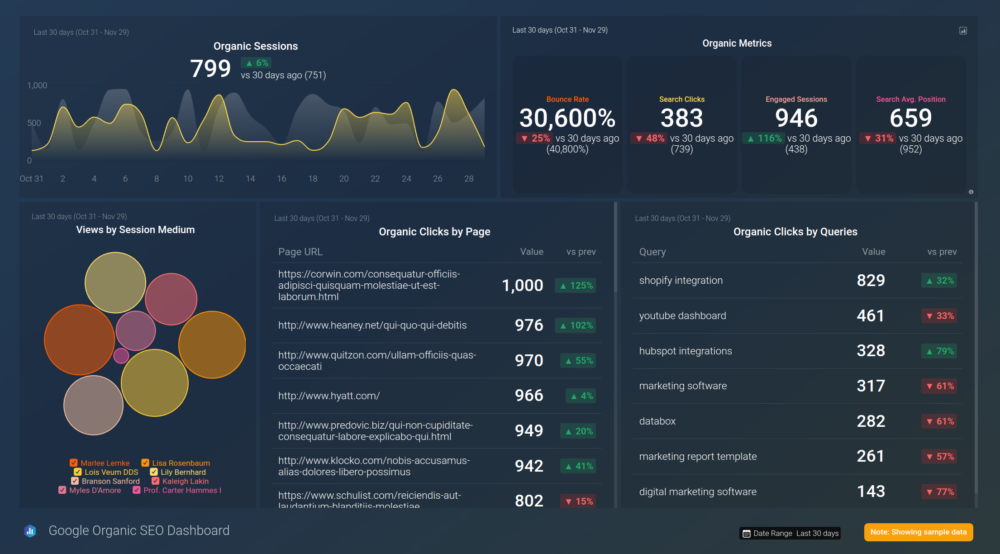

Tracking the performance of your SEO efforts is important. You can easily monitor relevant SEO KPIs like clicks by page, engaged sessions, or views by session medium by downloading this Google Organic SEO Dashboard .

How successful is your sales team? It’s easy to analyze their performance and predict future growth if you choose this HubSpot CRM Sales Analytics Overview dashboard template and track metrics such as average time to close the deal, new deals amount, or average revenue per new client.

Customer Support Analysis Data Report Example

Customer support is one of the essential factors that impact your business growth. You can use this streamlined, customizable Customer Success dashboard template . In a single dashboard, you can monitor metrics such as customer satisfaction score, new MRR, or time to first response time.

Other than being free and intuitive, this HelpScout for Customer Support dashboard template is also customizable and enables you to track the most vital metrics that indicate your customer support agents’ performance: handle time, happiness score, interactions per resolution, and more.

Is your online store improving or failing? You can easily collect relevant data about your store and monitor the most important metrics like total sales, orders placed, and new customers by downloading this WooCommerce Shop Overview dashboard template .

Does your IT department need feedback on their project management performance? Download this Jira dashboard template to track vital metrics such as issues created or resolved, issues by status, etc. Jira enables you to gain valuable insights into your teams’ productivity.

Need to know if your social media strategy is successful? You can find that out by using this easy-to-understand Social Media Awareness & Engagement dashboard template . Here you can monitor and analyze metrics like sessions by social source, track the number of likes and followers, and measure the traffic from each source.

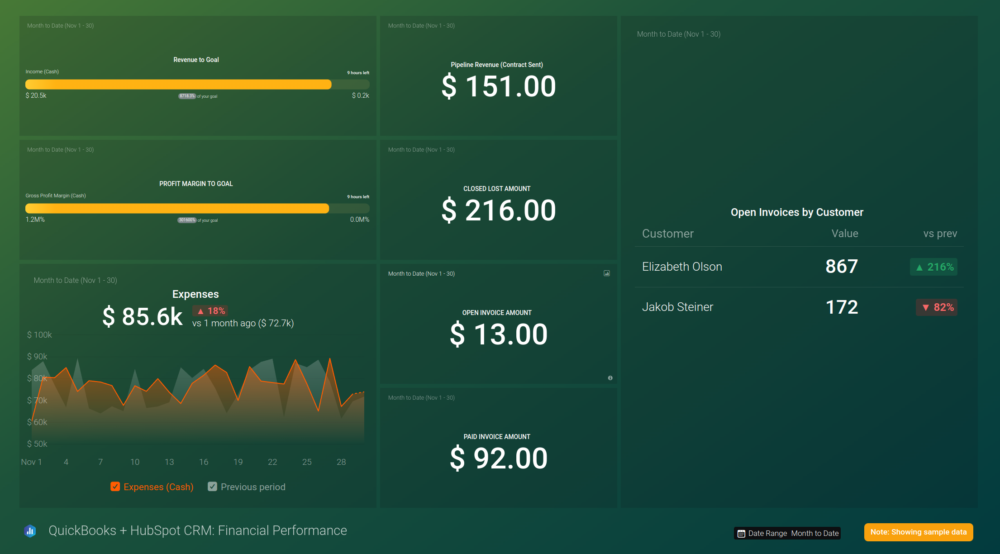

Tracking your finances is critical for keeping your business profitable. If you want to monitor metrics such as the number of open invoices, open deals amount by stage by pipeline, or closed-won deals, use this free QuickBooks + HubSpot CRM Financial Performance dashboard template .

Rely on Accurate Data with Databox

“I don’t have time to build custom reports from scratch.”

“It takes too long and becomes daunting very soon.”

“I’m not sure how to organize the data to make it effective and prove the value of my work.”

Does this sound like you?

Well, it’s something we all said at some point – creating data analytics reports can be time-consuming and tiring. And you’re still not sure if the report is compelling and understandable enough when you’re done.

That’s why we decided to create Databox dashboards – a world-class solution for saving your money and time. We build streamlined and easy-to-follow dashboards that include all the metrics that you may need and allow you to create custom ones if necessary. That way, you can use templates and adjust them to any new project or client without having to build a report from scratch.

You can skip the setup and get your first dashboard for free in just 24 hours, with our fantastic customer support team on the line to assist you with the metrics you should track and the structure you should use.

Enjoy crafting brilliant data analysis reports that will improve your business – it’s never been faster and more effortless. Sign up today and get your free dashboard in no time.


A Step-by-Step Guide to the Data Analysis Process

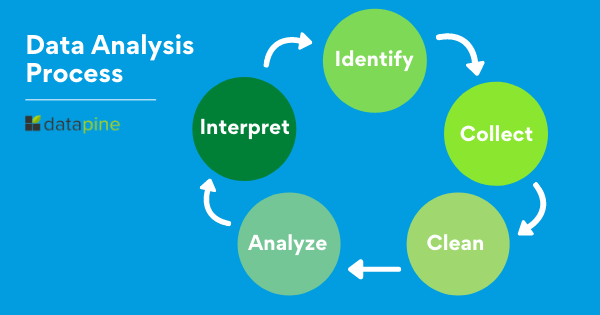

Like any scientific discipline, data analysis follows a rigorous step-by-step process. Each stage requires different skills and know-how. To get meaningful insights, though, it’s important to understand the process as a whole. An underlying framework is invaluable for producing results that stand up to scrutiny.

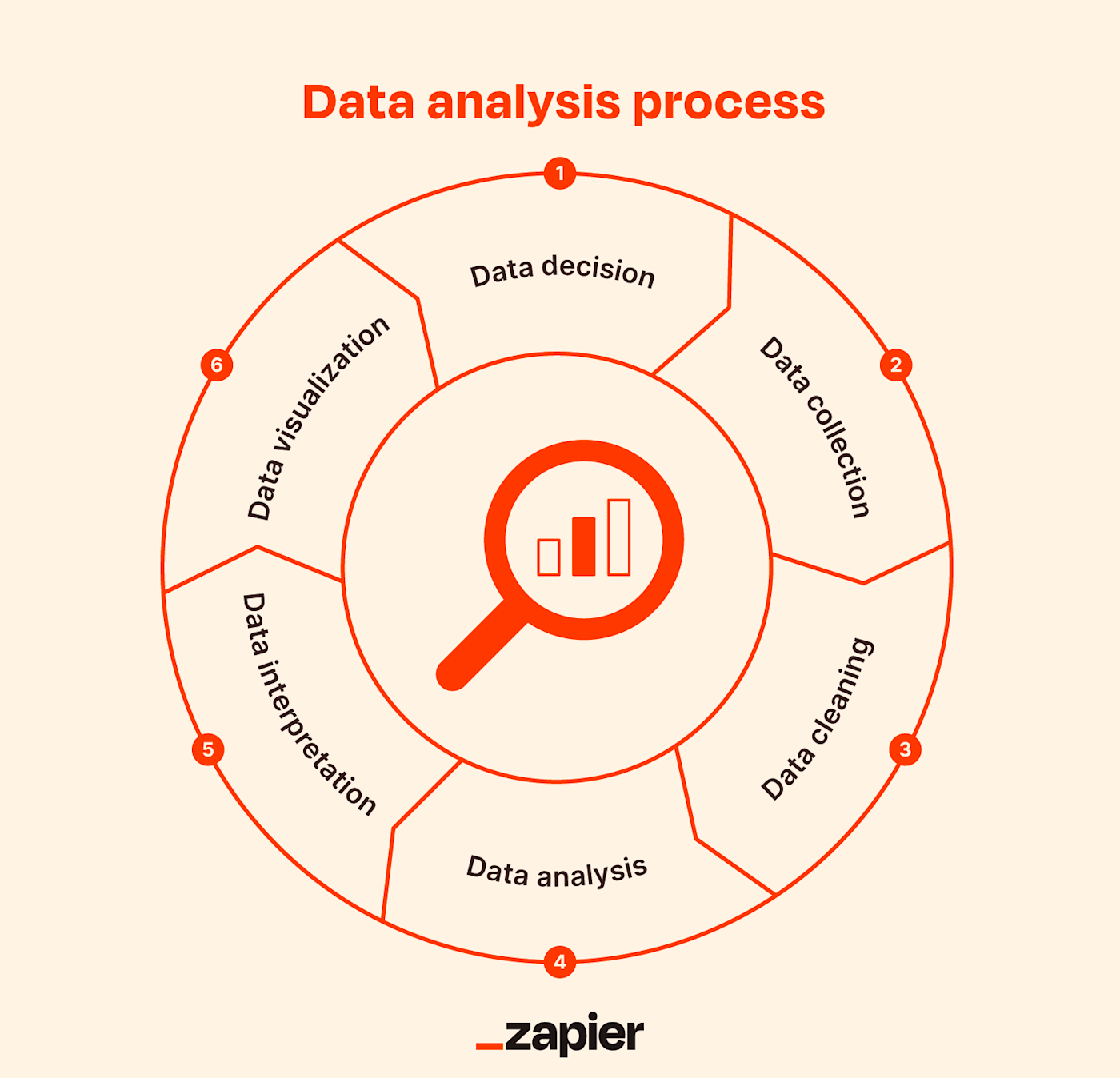

In this post, we’ll explore the main steps in the data analysis process. This will cover how to define your goal, collect data, and carry out an analysis. Where applicable, we’ll also use examples and highlight a few tools to make the journey easier. When you’re done, you’ll have a much better understanding of the basics. This will help you tweak the process to fit your own needs.

Here are the steps we’ll take you through:

- Defining the question

- Collecting the data

- Cleaning the data

- Analyzing the data

- Sharing your results

- Embracing failure


Ready? Let’s get started with step one.

1. Step one: Defining the question

The first step in any data analysis process is to define your objective. In data analytics jargon, this is sometimes called the ‘problem statement’.

Defining your objective means coming up with a hypothesis and figuring out how to test it. Start by asking: What business problem am I trying to solve? While this might sound straightforward, it can be trickier than it seems. For instance, your organization’s senior management might pose an issue, such as: “Why are we losing customers?” It’s possible, though, that this doesn’t get to the core of the problem. A data analyst’s job is to understand the business and its goals in enough depth that they can frame the problem the right way.

Let’s say you work for a fictional company called TopNotch Learning. TopNotch creates custom training software for its clients. While it is excellent at securing new clients, it has much lower repeat business. As such, your question might not be, “Why are we losing customers?” but, “Which factors are negatively impacting the customer experience?” or better yet: “How can we boost customer retention while minimizing costs?”

Now that you’ve defined a problem, you need to determine which sources of data will best help you solve it. This is where your business acumen comes in again. For instance, perhaps you’ve noticed that the sales process for new clients is very slick, but that the production team is inefficient. Knowing this, you could hypothesize that the sales process wins lots of new clients, but the subsequent customer experience is lacking. Could this be why customers don’t come back? Which sources of data will help you answer this question?
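
To make a question like this testable, it helps to pin down the metric behind it. The sketch below is one hedged way to quantify “repeat business” for the fictional TopNotch Learning. The client names, project records, and the `repeat_business_rate` helper are all hypothetical.

```python
from collections import Counter

# Hypothetical project records: one (client, project) pair per completed project.
projects = [
    ("Acme", "Onboarding course"),
    ("Acme", "Compliance course"),
    ("Birch", "Sales training"),
    ("Cedar", "Safety training"),
    ("Dune", "Onboarding course"),
]


def repeat_business_rate(records):
    """Share of clients who commissioned more than one project."""
    projects_per_client = Counter(client for client, _ in records)
    if not projects_per_client:
        return 0.0
    repeat_clients = sum(1 for n in projects_per_client.values() if n > 1)
    return repeat_clients / len(projects_per_client)


rate = repeat_business_rate(projects)
print(f"Repeat business rate: {rate:.0%}")
```

Once the metric has a precise definition, it is easier to decide which data sources you need in order to track it.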

Tools to help define your objective

Defining your objective is mostly about soft skills, business knowledge, and lateral thinking. But you’ll also need to keep track of business metrics and key performance indicators (KPIs). Monthly reports can allow you to track problem points in the business. Some KPI dashboards come with a fee, like Databox and DashThis. However, you’ll also find open-source software like Grafana, Freeboard, and Dashbuilder. These are great for producing simple dashboards, both at the beginning and the end of the data analysis process.

2. Step two: Collecting the data

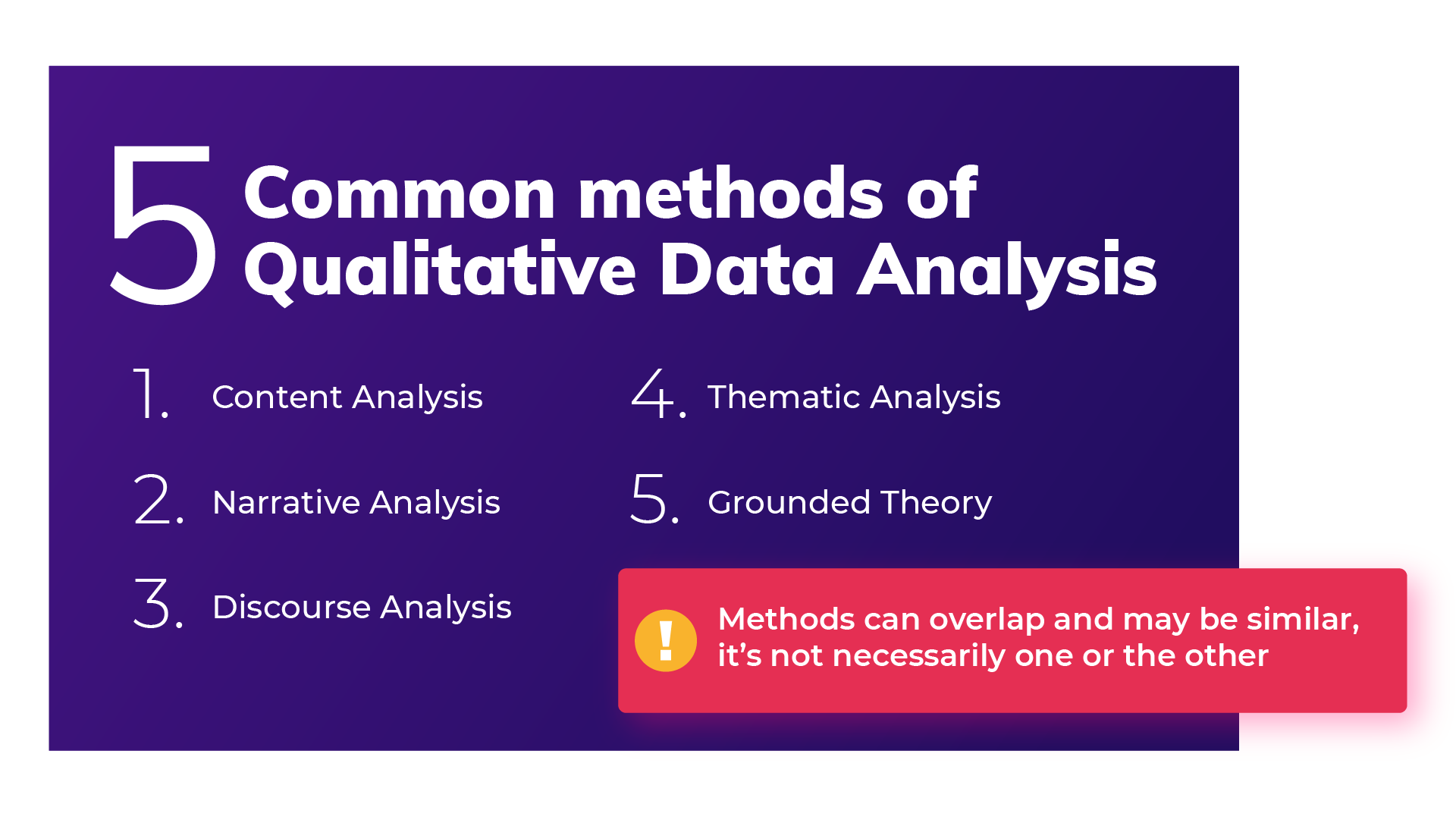

Once you’ve established your objective, you’ll need to create a strategy for collecting and aggregating the appropriate data. A key part of this is determining which data you need. This might be quantitative (numeric) data, e.g. sales figures, or qualitative (descriptive) data, such as customer reviews. All data fit into one of three categories: first-party, second-party, and third-party data. Let’s explore each one.

What is first-party data?

First-party data are data that you, or your company, have directly collected from customers. It might come in the form of transactional tracking data or information from your company’s customer relationship management (CRM) system. Whatever its source, first-party data is usually structured and organized in a clear, defined way. Other sources of first-party data might include customer satisfaction surveys, focus groups, interviews, or direct observation.

What is second-party data?

To enrich your analysis, you might want to secure a secondary data source. Second-party data is the first-party data of other organizations. This might be available directly from the company or through a private marketplace. The main benefit of second-party data is that they are usually structured, and although they will be less relevant than first-party data, they also tend to be quite reliable. Examples of second-party data include website, app or social media activity, like online purchase histories, or shipping data.

What is third-party data?

Third-party data is data that has been collected and aggregated from numerous sources by a third-party organization. Often (though not always) third-party data contains a vast amount of unstructured data points (big data). Many organizations collect big data to create industry reports or to conduct market research. The research and advisory firm Gartner is a good real-world example of an organization that collects big data and sells it on to other companies. Open data repositories and government portals are also sources of third-party data.

Tools to help you collect data

Once you’ve devised a data strategy (i.e. you’ve identified which data you need, and how best to go about collecting them), there are many tools you can use to help you. One thing you’ll need, regardless of industry or area of expertise, is a data management platform (DMP). A DMP is a piece of software that allows you to identify and aggregate data from numerous sources, before manipulating them, segmenting them, and so on. There are many DMPs available. Some well-known enterprise DMPs include Salesforce DMP, SAS, and the data integration platform, Xplenty. If you want to play around, you can also try some open-source platforms like Pimcore or D:Swarm.


3. Step three: Cleaning the data

Once you’ve collected your data, the next step is to get it ready for analysis. This means cleaning, or ‘scrubbing’ it, and is crucial in making sure that you’re working with high-quality data. Key data cleaning tasks include:

- Removing major errors, duplicates, and outliers —all of which are inevitable problems when aggregating data from numerous sources.

- Removing unwanted data points —extracting irrelevant observations that have no bearing on your intended analysis.

- Bringing structure to your data —general ‘housekeeping’, i.e. fixing typos or layout issues, which will help you map and manipulate your data more easily.

- Filling in major gaps —as you’re tidying up, you might notice that important data are missing. Once you’ve identified gaps, you can go about filling them.

A good data analyst will spend around 70-90% of their time cleaning their data. This might sound excessive. But focusing on the wrong data points (or analyzing erroneous data) will severely impact your results. It might even send you back to square one, so don’t rush it!
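
The cleaning tasks listed above can be sketched in a few lines of plain Python. This is a minimal illustration only: the records are invented, and the outlier rule (anything above ten times the median cost) and the median fill are assumptions chosen for the example, not recommended defaults.

```python
from statistics import median

# Hypothetical raw records: (client, sector, project_cost); None marks a missing cost.
raw = [
    ("Acme", "Retail ", 12000),
    ("Acme", "Retail ", 12000),      # exact duplicate
    ("Birch", "finance", 15000),
    ("Cedar", "Retail", None),       # missing cost
    ("Dune", "Finance", 14000),
    ("Elm", "retail", 1400000),      # likely a data-entry error
]

# 1. Bring structure: trim whitespace and normalize capitalization.
tidy = [(client.strip(), sector.strip().title(), cost) for client, sector, cost in raw]

# 2. Remove exact duplicates while keeping the original order.
deduped = list(dict.fromkeys(tidy))

# 3. Drop implausible outliers (assumed rule: more than 10x the median known cost).
known_costs = [cost for _, _, cost in deduped if cost is not None]
typical = median(known_costs)
plausible = [row for row in deduped if row[2] is None or row[2] <= 10 * typical]

# 4. Fill gaps with the median of the remaining known costs (one simple option of many).
fill_value = median(cost for _, _, cost in plausible if cost is not None)
clean = [(c, s, cost if cost is not None else fill_value) for c, s, cost in plausible]

for row in clean:
    print(row)
```

In practice you would document each rule you apply, since every removal or fill changes what the later analysis can show.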

Carrying out an exploratory analysis

Another thing many data analysts do (alongside cleaning data) is to carry out an exploratory analysis. This helps identify initial trends and characteristics, and can even refine your hypothesis. Let’s use our fictional learning company as an example again. Carrying out an exploratory analysis, perhaps you notice a correlation between how much TopNotch Learning’s clients pay and how quickly they move on to new suppliers. This might suggest that a low-quality customer experience (the assumption in your initial hypothesis) is actually less of an issue than cost. You might, therefore, take this into account.
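
A quick way to check for a relationship like this during exploration is a correlation coefficient. The sketch below computes Pearson’s r by hand for invented figures. The two lists and the `pearson` helper are hypothetical, and a real analysis would use far more data points.

```python
from math import sqrt

# Hypothetical client data: annual spend (in thousands) and months until the client left.
annual_spend = [10, 20, 30, 40, 50]
months_retained = [30, 26, 20, 14, 10]


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of the same length (at least 2 values)")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return covariance / (spread_x * spread_y)


r = pearson(annual_spend, months_retained)
print(f"Correlation between spend and retention time: {r:.2f}")
```

A value close to -1 would mean that higher-paying clients tend to leave sooner. Correlation alone does not show that price causes the departures, which is why it refines the hypothesis rather than settling it.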

Tools to help you clean your data

Cleaning datasets manually, especially large ones, can be daunting. Luckily, there are many tools available to streamline the process. Open-source tools, such as OpenRefine, are excellent for basic data cleaning, as well as high-level exploration. However, free tools offer limited functionality for very large datasets. Python libraries (e.g. Pandas) and some R packages are better suited for heavy data scrubbing. You will, of course, need to be familiar with the languages. Alternatively, enterprise tools are also available, such as Data Ladder, which is one of the highest-rated data-matching tools in the industry. There are many more. Why not see which free data cleaning tools you can find to play around with?

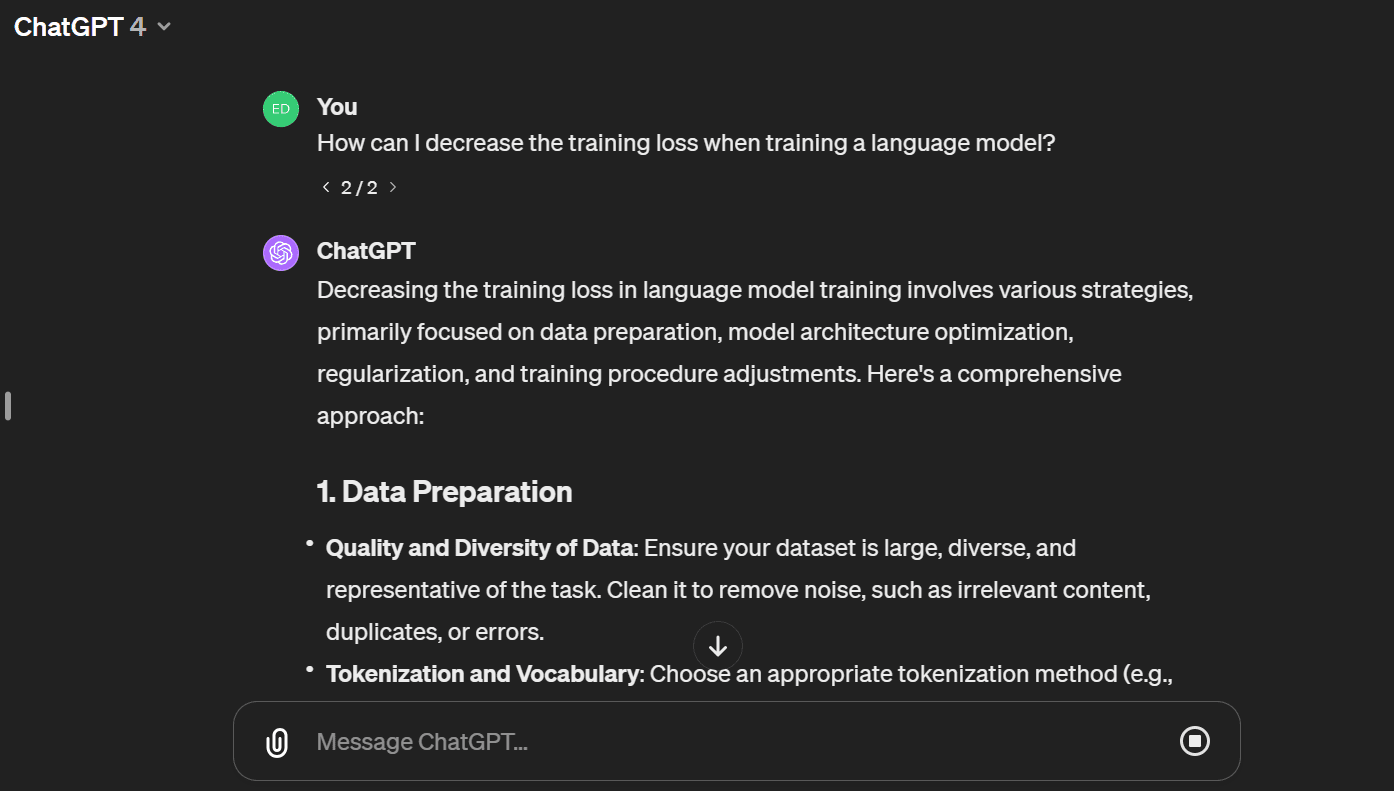

4. Step four: Analyzing the data

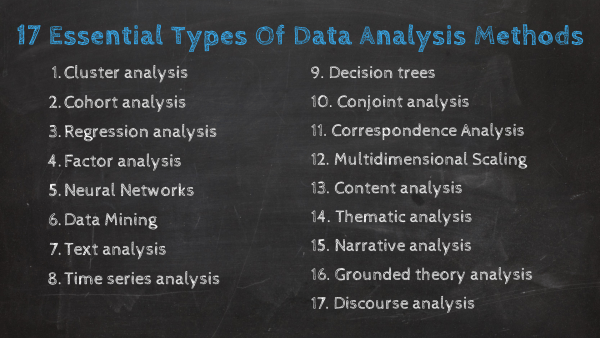

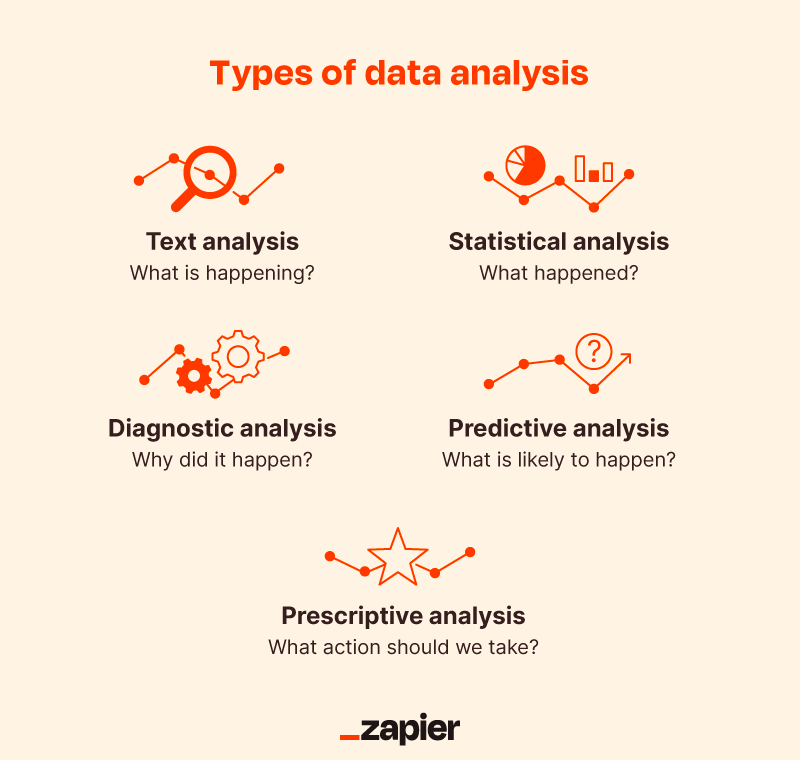

Finally, you’ve cleaned your data. Now comes the fun bit—analyzing it! The type of data analysis you carry out largely depends on what your goal is. But there are many techniques available. Univariate or bivariate analysis, time-series analysis, and regression analysis are just a few you might have heard of. More important than the different types, though, is how you apply them. This depends on what insights you’re hoping to gain. Broadly speaking, all types of data analysis fit into one of the following four categories.

Descriptive analysis

Descriptive analysis identifies what has already happened. It is a common first step that companies carry out before proceeding with deeper explorations. As an example, let’s refer back to our fictional learning provider once more. TopNotch Learning might use descriptive analytics to analyze course completion rates for their customers. Or they might identify how many users access their products during a particular period. Perhaps they’ll use it to measure sales figures over the last five years. While the company might not draw firm conclusions from any of these insights, summarizing and describing the data will help them to determine how to proceed.
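
As a small illustration of descriptive analysis, the sketch below summarizes course completion rates with Python’s standard `statistics` module. The client names and percentages are invented for the example.

```python
from statistics import mean, median

# Hypothetical course completion rates (percent) per client.
completion_rates = {"Acme": 82, "Birch": 64, "Cedar": 91, "Dune": 47, "Elm": 76}

rates = list(completion_rates.values())
summary = {
    "clients": len(rates),
    "mean": mean(rates),
    "median": median(rates),
    "lowest": min(completion_rates, key=completion_rates.get),
    "highest": max(completion_rates, key=completion_rates.get),
}

for name, value in summary.items():
    print(f"{name}: {value}")
```

A summary like this only describes what happened. It does not explain why one client’s completion rate is low, which is the job of the next type of analysis.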


Diagnostic analysis

Diagnostic analytics focuses on understanding why something has happened. It is literally the diagnosis of a problem, just as a doctor uses a patient’s symptoms to diagnose a disease. Remember TopNotch Learning’s business problem? ‘Which factors are negatively impacting the customer experience?’ A diagnostic analysis would help answer this. For instance, it could help the company draw correlations between the issue (struggling to gain repeat business) and factors that might be causing it (e.g. project costs, speed of delivery, customer sector, etc.). Let’s imagine that, using diagnostic analytics, TopNotch realizes its clients in the retail sector are departing at a faster rate than other clients. This might suggest that the company is losing customers because it lacks expertise in this sector. And that’s a useful insight!
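
One simple diagnostic technique is to break the problem metric down by segment. The sketch below compares the share of clients who did not return across sectors. The records and the `churn_by_segment` helper are hypothetical, and with so few rows the result is only illustrative.

```python
from collections import defaultdict

# Hypothetical client records: (client, sector, returned_for_second_project).
clients = [
    ("Acme", "Retail", False),
    ("Birch", "Finance", True),
    ("Cedar", "Retail", False),
    ("Dune", "Finance", True),
    ("Elm", "Retail", True),
    ("Fir", "Healthcare", False),
    ("Gale", "Healthcare", True),
]


def churn_by_segment(records):
    """Return {segment: share of clients who did not come back}."""
    totals = defaultdict(int)
    lost = defaultdict(int)
    for _, segment, returned in records:
        totals[segment] += 1
        if not returned:
            lost[segment] += 1
    return {segment: lost[segment] / totals[segment] for segment in totals}


churn = churn_by_segment(clients)
for segment, rate in sorted(churn.items(), key=lambda item: item[1], reverse=True):
    print(f"{segment}: {rate:.0%} of clients did not return")
```

The same breakdown can be repeated for other candidate factors, such as project cost bands or delivery speed, to see which one separates returning clients from departing ones most clearly.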

Predictive analysis

Predictive analysis allows you to identify future trends based on historical data. In business, predictive analysis is commonly used to forecast future growth, for example. But it doesn’t stop there. Predictive analysis has grown increasingly sophisticated in recent years. The speedy evolution of machine learning allows organizations to make surprisingly accurate forecasts. Take the insurance industry. Insurance providers commonly use past data to predict which customer groups are more likely to get into accidents. As a result, they’ll hike up customer insurance premiums for those groups. Likewise, the retail industry often uses transaction data to predict where future trends lie, or to determine seasonal buying habits to inform their strategies. These are just a few simple examples, but the untapped potential of predictive analysis is pretty compelling.
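
The simplest form of a forecast from historical data is a straight-line trend. The sketch below fits an ordinary least-squares line to invented quarterly revenue figures and extends it by one period. It is a deliberately basic stand-in for the machine learning models mentioned above, and the `fit_line` helper is hypothetical.

```python
# Hypothetical quarterly revenue (in thousands), oldest first.
revenue = [100, 110, 120, 130]


def fit_line(ys):
    """Ordinary least-squares line through (0, ys[0]), (1, ys[1]), ..."""
    n = len(ys)
    if n < 2:
        raise ValueError("need at least two observations")
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    intercept = mean_y - slope * mean_x
    return slope, intercept


slope, intercept = fit_line(revenue)
# The next period has index len(revenue) on the x-axis.
next_quarter = slope * len(revenue) + intercept
print(f"Forecast for next quarter: {next_quarter:.1f}")
```

A linear trend assumes the future continues the past pattern, so it is worth stating that assumption whenever you share a forecast like this.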

Prescriptive analysis

Prescriptive analysis allows you to make recommendations for the future. This is the final step in the analytics part of the process. It’s also the most complex. This is because it incorporates aspects of all the other analyses we’ve described. A great example of prescriptive analytics is the algorithms that guide Google’s self-driving cars. Every second, these algorithms make countless decisions based on past and present data, ensuring a smooth, safe ride. Prescriptive analytics also helps companies decide on new products or areas of business to invest in.

Learn more: What are the different types of data analysis?

5. Step five: Sharing your results

You’ve finished carrying out your analyses. You have your insights. The final step of the data analytics process is to share these insights with the wider world (or at least with your organization’s stakeholders!) This is more complex than simply sharing the raw results of your work—it involves interpreting the outcomes, and presenting them in a manner that’s digestible for all types of audiences. Since you’ll often present information to decision-makers, it’s very important that the insights you present are 100% clear and unambiguous. For this reason, data analysts commonly use reports, dashboards, and interactive visualizations to support their findings.

How you interpret and present results will often influence the direction of a business. Depending on what you share, your organization might decide to restructure, to launch a high-risk product, or even to close an entire division. That’s why it’s very important to provide all the evidence that you’ve gathered, and not to cherry-pick data. Ensuring that you cover everything in a clear, concise way will prove that your conclusions are scientifically sound and based on the facts. On the flip side, it’s important to highlight any gaps in the data or to flag any insights that might be open to interpretation. Honest communication is the most important part of the process. It will help the business, while also helping you to excel at your job!

Tools for interpreting and sharing your findings

There are tons of data visualization tools available, suited to different experience levels. Popular tools requiring little or no coding skills include Google Charts, Tableau, Datawrapper, and Infogram. If you’re familiar with Python and R, there are also many data visualization libraries and packages available. For instance, check out the Python libraries Plotly, Seaborn, and Matplotlib. Whichever data visualization tools you use, make sure you polish up your presentation skills, too. Remember: Visualization is great, but communication is key!

You can learn more about storytelling with data in this free, hands-on tutorial. We show you how to craft a compelling narrative for a real dataset, resulting in a presentation to share with key stakeholders. This is an excellent insight into what it’s really like to work as a data analyst!

6. Step six: Embrace your failures

The last ‘step’ in the data analytics process is to embrace your failures. The path we’ve described above is more of an iterative process than a one-way street. Data analytics is inherently messy, and the process you follow will be different for every project. For instance, while cleaning data, you might spot patterns that spark a whole new set of questions. This could send you back to step one (to redefine your objective). Equally, an exploratory analysis might highlight a set of data points you’d never considered using before. Or maybe you find that the results of your core analyses are misleading or erroneous. This might be caused by mistakes in the data, or human error earlier in the process.

While these pitfalls can feel like failures, don’t be disheartened if they happen. Data analysis is inherently chaotic, and mistakes occur. What’s important is to hone your ability to spot and rectify errors. If data analytics was straightforward, it might be easier, but it certainly wouldn’t be as interesting. Use the steps we’ve outlined as a framework, stay open-minded, and be creative. If you lose your way, you can refer back to the process to keep yourself on track.

In this post, we’ve covered the main steps of the data analytics process. These core steps can be amended, re-ordered and re-used as you deem fit, but they underpin every data analyst’s work:

- Define the question: What business problem are you trying to solve? Frame it as a question to help you focus on finding a clear answer.

- Collect data: Create a strategy for collecting data. Which data sources are most likely to help you solve your business problem?

- Clean the data: Explore, scrub, tidy, de-dupe, and structure your data as needed. Do whatever you have to! But don’t rush; take your time!

- Analyze the data: Carry out various analyses to obtain insights. Focus on the four types of data analysis: descriptive, diagnostic, predictive, and prescriptive.

- Share your results: How best can you share your insights and recommendations? A combination of visualization tools and communication is key.

- Embrace your mistakes: Mistakes happen. Learn from them. This is what transforms a good data analyst into a great one.

What next? From here, we strongly encourage you to explore the topic on your own. Get creative with the steps in the data analysis process, and see what tools you can find. As long as you stick to the core principles we’ve described, you can create a tailored technique that works for you.

To learn more, check out our free, 5-day data analytics short course. You might also be interested in the following:

- These are the top 9 data analytics tools

- 10 great places to find free datasets for your next project

- How to build a data analytics portfolio

Data analysis write-ups

What should a data-analysis write-up look like?

Writing up the results of a data analysis is not a skill that anyone is born with. It requires practice and, at least in the beginning, a bit of guidance.

Organization

When writing your report, organization will set you free. A good outline is: 1) overview of the problem, 2) your data and modeling approach, 3) the results of your data analysis (plots, numbers, etc), and 4) your substantive conclusions.

1) Overview Describe the problem. What substantive question are you trying to address? This needn’t be long, but it should be clear.

2) Data and model What data did you use to address the question, and how did you do it? When describing your approach, be specific. For example:

- Don’t say, “I ran a regression” when you instead can say, “I fit a linear regression model to predict price that included a house’s size and neighborhood as predictors.”

- Justify important features of your modeling approach. For example: “Neighborhood was included as a categorical predictor in the model because Figure 2 indicated clear differences in price across the neighborhoods.”

Sometimes your Data and Model section will contain plots or tables, and sometimes it won’t. If you feel that a plot helps the reader understand the problem or data set itself, as opposed to your results, then go ahead and include it. A great example here is Tables 1 and 2 in the main paper on the PREDIMED study. These tables help the reader understand some important properties of the data and approach, but not the results of the study itself.

3) Results In your results section, include any figures and tables necessary to make your case. Label them (Figure 1, 2, etc), give them informative captions, and refer to them in the text by their numbered labels where you discuss them. Typical items to include here are pictures of the data, pictures and tables that show the fitted model, and tables of model coefficients and summaries.

4) Conclusion What did you learn from the analysis? What is the answer, if any, to the question you set out to address?

General advice

Make the sections as short or long as they need to be. For example, a conclusions section is often pretty short, while a results section is usually a bit longer.

It’s OK to use the first person to avoid awkward or bizarre sentence constructions, but try to do so sparingly.

Do not include computer code unless explicitly called for. Note: model outputs do not count as computer code. Outputs should be used as evidence in your results section (ideally formatted in a nice way). By code, I mean the sequence of commands you used to process the data and produce the outputs.

When in doubt, use shorter words and sentences.

A very common way for reports to go wrong is when the writer simply narrates the thought process he or she followed: “First I did this, but it didn’t work. Then I did something else, and I found A, B, and C. I wasn’t really sure what to make of B, but C was interesting, so I followed up with D and E. Then having done this…” Do not do this. The desire for specificity is admirable, but the overall effect is one of amateurism. Follow the recommended outline above.

Here’s a good example of a write-up for an analysis of a few relatively simple problems. Because the problems are so straightforward, there’s not much of a need for an outline of the kind described above. Nonetheless, the spirit of these guidelines is clearly in evidence. Notice the clear exposition, the labeled figures and tables that are referred to in the text, and the careful integration of visual and numerical evidence into the overall argument. This is one worth emulating.


Writing a Good Data Analysis Report: 7 Steps

As a data analyst, you feel most comfortable when you’re alone with all the numbers and data. You’re able to analyze them with confidence and reach the results you were asked to find. But, this is not the end of the road for you. You still need to write a data analysis report explaining your findings to the laymen - your clients or coworkers.

That means you need to think about your target audience, that is the people who’ll be reading your report.

They don’t have nearly as much knowledge about data analysis as you do. So, your report needs to be straightforward and informative. The article below will help you learn how to do it. Let’s take a look at some practical tips you can apply to your data analysis report writing and the benefits of doing so.


Data Analysis Report Writing: 7 Steps

The process of writing a data analysis report is far from simple, but you can master it quickly, with the right guidance and examples of similar reports.

This is why we've prepared a step-by-step guide that will cover everything you need to know about this process, as simply as possible. Let’s get to it.

Consider Your Audience

You are writing your report for a certain target audience, and you need to keep them in mind while writing. Depending on their level of expertise, you’ll need to adjust your report and ensure it speaks to them. So, before you go any further, ask yourself:

Who will be reading this report? How well do they understand the subject?

Let’s say you’re explaining the methodology you used to reach your conclusions and find the data in question. If the reader isn’t familiar with these tools and software, you’ll have to simplify it for them and provide additional explanations.

So, you won't be writing the same type of report for a coworker who's been on your team for years or a client who's seeing data analysis for the first time. Based on this determining factor, you'll think about:

the language and vocabulary you’re using

abbreviations and level of technicality

the depth you’ll go into to explain something

the type of visuals you’ll add

Your readers’ expertise dictates the tone of your report and you need to consider it before writing even a single word.

Draft Out the Sections

The next thing you need to do is create a draft of your data analysis report. This is just a skeleton of what your report will be once you finish. But, you need a starting point.

So, think about the sections you'll include and what each section is going to cover. Typically, your report should be divided into the following sections:

Introduction

Body (Data, Methods, Analysis, Results)

Conclusion

For each section, write down several short bullet points regarding the content to cover. Below, we'll discuss each section more elaborately.

Develop The Body

The body of your report is the most important section. You need to organize it into subsections and present all the information your readers will be interested in.

We suggest the following subsections.

Data

Explain what data you used to conduct your analysis. Be specific and explain how you gathered the data, what your sample was, what tools and resources you’ve used, and how you’ve organized your data. This will give the reader a deeper understanding of your data sample and make your report more solid.

Also, explain why you chose the specific data for your sample. For instance, you may say, “The sample only includes data on customers acquired during 2021, at the peak of the pandemic.”

Methods

Next, you need to explain what methods you’ve used to analyze the data. This simply means you need to explain why and how you chose specific methods. You also need to explain why these methods are the best fit for the goals you’ve set and the results you’re trying to reach.

Back up your methodology section with background information on each method or tool used. Explain how these resources are typically used in data analysis.

Analysis

After you've explained the data and methods you've used, this next section brings those two together. The analysis section shows how you've analyzed the specific data using the specific methods.

This means you’ll show your calculations, charts, and analyses, step by step. Add descriptions and explain each of the steps. Keep it as simple as possible so that even the most inexperienced of your readers understand every word.

Results

This final section of the body can be considered the most important section of your report. Most of your clients will skim the rest of the report to reach this section.

That's because it answers the questions you’ve raised. It shares the results that were reached and gives the reader new findings, facts, and evidence.

So, explain and describe the results using numbers. Then, add a written description of what each of the numbers stands for and what it means for the entire analysis. Summarize your results and finalize the report on a strong note.

Write the Introduction

Yes, it may seem strange to write the introduction section at the end, but it’s the smartest way to do it. This section briefly explains what the report will cover. That’s why you should write it after you’ve finished writing the Body.

In your introduction, explain:

the question you’ve raised and answered with the analysis

context of the analysis and background information

short outline of the report

Simply put, you’re telling your audience what to expect.

Add a Short Conclusion

Finally, the last section of your paper is a brief conclusion. It repeats what you described in the Body, but points out only the most important details.

It should be less than a page long and use straightforward language to deliver the most important findings. It should also include a paragraph about the implications and importance of those findings for the client, customer, business, or company that hired you.

Include Data Visualization Elements

You have all the data and numbers in your mind and find it easy to understand what the data is saying. But, to a layman or someone less experienced than yourself, it can be quite a puzzle. All the information that your data analysis has found can create a mess in the head of your reader.

So, you should simplify it by using data visualization elements.

First, let’s define the most common and useful data visualization elements you can use in your report:

There are subcategories to each of the elements and you should explore them all to decide what will do the best job for your specific case. For instance, you'll find different types of charts, including pie charts, bar charts, area charts, and spider charts.

For each data visualization element, add a brief description to tell the readers what information it contains. You can also add a title to each element and create a table of contents for visual elements only.

Proofread & Edit Before Submission

All the hard work you’ve invested in writing a good data analysis report might go to waste if you don’t edit and proofread. Proofreading and editing will help you eliminate potential mistakes, but also take another objective look at your report.

First, do the editing part. It includes:

reading the whole report objectively, like you’re seeing it for the first time

leaving an open mind for changes

adding or removing information

rearranging sections

finding better words to say something

You should repeat the editing phase a couple of times until you're completely happy with the result. Once you're certain the content is all tidied up, you can move on to the proofreading stage. It includes:

finding and removing grammar and spelling mistakes

rethinking vocabulary choices

improving clarity

improving readability

You can use an online proofreading tool to make things faster. If you really want professional help, Grab My Essay is a great choice. Their professional writers can edit and rewrite your entire report, to make sure it’s impeccable before submission.

Whichever you choose, whether you proofread the report yourself or get some help with it, make sure your report is well-organized and completely error-free.

Benefits of Writing Well-Structured Data Analysis Reports

Yes, writing a good data analysis report is a lot of hard work. But, if you understand the benefits of writing it, you’ll be more motivated and willing to invest the time and effort. After knowing how it can help you in different segments of your professional journey, you’ll be more willing to learn how to do it.

Below are the main benefits a data analysis report brings to the table.

Improved Collaboration

When you’re writing a data analysis report, you need to be aware more than one end user is going to use it. Whether it’s your employer, customer, or coworker - you need to make sure they’re all on the same page. And when you write a data analysis report that is easy to understand and learn from, you’re creating a bridge between all these people.

Simply, all of them are given accurate data they can rely on and you’re thus removing the potential misunderstandings that can happen in communication. This improves the overall collaboration level and makes everyone more open and helpful.

Increased Efficiency

People who are reading your data analysis report need the information it contains for some reason. They might use it to do their part of the job, to make decisions, or to report further to someone else. Either way, the better your report, the more efficiently they'll be able to work. And, if you rely on those people in turn, you'll benefit from this increased productivity as well.

Data tells a story about a business, project, or venture. It's able to show how well you've performed, what turned out to be a great move, and what needs to be reimagined. This means that a data analysis report provides valuable insight and measurable KPIs (key performance indicators) that you’re able to use to grow and develop.

Clear Communication

Information is key regardless of the industry you're in or the type of business you're doing. Data analysis finds that information and proves its accuracy and importance. But, if those findings and the information itself aren't communicated clearly, it's like you haven't even found them.

This is why a data analysis report is crucial. It will present the information less technically and bring it closer to the readers.

Final Thoughts

As you can see, it takes some skill and a bit more practice to write a good data analysis report. But, all the effort you invest in writing it will be worth it once the results kick in. You’ll improve the communication between you and your clients, employers, or coworkers. People will be able to understand, rely on, and use the analysis you’ve conducted.

So, don’t be afraid and start writing your first data analysis report. Just follow the 7 steps we’ve listed and use a tool such as ProWebScraper to help you with website data analysis. You’ll be surprised when you see the result of your hard work.

Jessica Fender is a business analyst and a blogger. She writes about business and data analysis, networking in this sector, and acquiring new skills. Her goal is to provide fresh and accurate information that readers can apply instantly.


What Is Data Analysis? (With Examples)

Data analysis is the practice of working with data to glean useful information, which can then be used to make informed decisions.

"It is a capital mistake to theorize before one has data. Insensibly one begins to twist facts to suit theories, instead of theories to suit facts," Sherlock Holmes proclaims in Sir Arthur Conan Doyle's A Scandal in Bohemia.

This idea lies at the root of data analysis. When we can extract meaning from data, it empowers us to make better decisions. And we’re living in a time when we have more data than ever at our fingertips.

Companies are wising up to the benefits of leveraging data. Data analysis can help a bank to personalize customer interactions, a health care system to predict future health needs, or an entertainment company to create the next big streaming hit.

The World Economic Forum Future of Jobs Report 2020 listed data analysts and scientists as the top emerging job, followed immediately by AI and machine learning specialists, and big data specialists [1]. In this article, you'll learn more about the data analysis process, different types of data analysis, and recommended courses to help you get started in this exciting field.

Read more: How to Become a Data Analyst (with or Without a Degree)

Data analysis process

As the data available to companies continues to grow both in amount and complexity, so too does the need for an effective and efficient process by which to harness the value of that data. The data analysis process typically moves through several iterative phases. Let’s take a closer look at each.

Identify the business question you’d like to answer. What problem is the company trying to solve? What do you need to measure, and how will you measure it?

Collect the raw data sets you’ll need to help you answer the identified question. Data collection might come from internal sources, like a company’s client relationship management (CRM) software, or from secondary sources, like government records or social media application programming interfaces (APIs).

Clean the data to prepare it for analysis. This often involves purging duplicate and anomalous data, reconciling inconsistencies, standardizing data structure and format, and dealing with white spaces and other syntax errors.

Analyze the data. By manipulating the data using various data analysis techniques and tools, you can begin to find trends, correlations, outliers, and variations that tell a story. During this stage, you might use data mining to discover patterns within databases or data visualization software to help transform data into an easy-to-understand graphical format.

Interpret the results of your analysis to see how well the data answered your original question. What recommendations can you make based on the data? What are the limitations to your conclusions?
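The cleaning phase described above (purging duplicates, standardizing format, dealing with white space) can be sketched in a few lines of Python. The record layout, the sample rows, and the `clean_records` helper are hypothetical. Real data sets usually need more rules than this, such as handling missing values and anomalous entries.

```python
def clean_records(rows):
    """Trim white space, lower-case emails, and drop duplicate records."""
    seen = set()
    cleaned = []
    for row in rows:
        # Collapse leading, trailing, and repeated inner white space
        name = " ".join(row["name"].split())
        # Standardize email format so duplicates can be detected
        email = row["email"].strip().lower()
        key = (name, email)
        if key in seen:
            continue  # skip records already seen after normalization
        seen.add(key)
        cleaned.append({"name": name, "email": email})
    return cleaned


# Hypothetical raw records with stray white space and a hidden duplicate
raw = [
    {"name": "  Ada  Lovelace ", "email": "ADA@example.com "},
    {"name": "Ada Lovelace", "email": "ada@example.com"},
    {"name": "Alan Turing", "email": "alan@example.com"},
]
cleaned = clean_records(raw)
print(cleaned)
```

Normalizing before comparing is the key design choice here: the first two rows only turn out to be duplicates once white space and letter case have been standardized.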

Watch this video to hear how Kevin, Director of Data Analytics at Google, defines data analysis.

Read more: What Does a Data Analyst Do? A Career Guide

Types of data analysis (with examples)

Data can be used to answer questions and support decisions in many different ways. To identify the best way to analyze your data, it can help to familiarize yourself with the four types of data analysis commonly used in the field.

In this section, we’ll take a look at each of these data analysis methods, along with an example of how each might be applied in the real world.

Descriptive analysis

Descriptive analysis tells us what happened. This type of analysis helps describe or summarize quantitative data by presenting statistics. For example, descriptive statistical analysis could show the distribution of sales across a group of employees and the average sales figure per employee.

Descriptive analysis answers the question, “what happened?”

Diagnostic analysis

If the descriptive analysis determines the “what,” diagnostic analysis determines the “why.” Let’s say a descriptive analysis shows an unusual influx of patients in a hospital. Drilling into the data further might reveal that many of these patients shared symptoms of a particular virus. This diagnostic analysis can help you determine that an infectious agent—the “why”—led to the influx of patients.

Diagnostic analysis answers the question, “why did it happen?”

Predictive analysis

So far, we’ve looked at types of analysis that examine and draw conclusions about the past. Predictive analytics uses data to form projections about the future. Using predictive analysis, you might notice that a given product has had its best sales during the months of September and October each year, leading you to predict a similar high point during the upcoming year.

Predictive analysis answers the question, “what might happen in the future?”
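The seasonal example above can be sketched by averaging sales per calendar month across several years and picking the strongest months. The sales figures and the `peak_months` helper are hypothetical, and the prediction rests on the assumption that past seasonality will repeat.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical monthly unit sales: (year, month, units sold)
sales = [
    (2021, 8, 90), (2021, 9, 140), (2021, 10, 150), (2021, 11, 100),
    (2022, 8, 95), (2022, 9, 150), (2022, 10, 160), (2022, 11, 105),
]


def peak_months(records, top_n=2):
    """Return the months with the highest average sales, best first."""
    by_month = defaultdict(list)
    for _year, month, units in records:
        by_month[month].append(units)
    averages = {month: mean(units) for month, units in by_month.items()}
    return sorted(averages, key=averages.get, reverse=True)[:top_n]


expected_peaks = peak_months(sales)
print(f"Months expected to sell best next year: {expected_peaks}")
```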

Prescriptive analysis

Prescriptive analysis takes all the insights gathered from the first three types of analysis and uses them to form recommendations for how a company should act. Using our previous example, this type of analysis might suggest a market plan to build on the success of the high sales months and harness new growth opportunities in the slower months.

Prescriptive analysis answers the question, “what should we do about it?”

This last type is where the concept of data-driven decision-making comes into play.

Read more: Advanced Analytics: Definition, Benefits, and Use Cases

What is data-driven decision-making (DDDM)?

Data-driven decision-making (sometimes abbreviated to DDDM) can be defined as the process of making strategic business decisions based on facts, data, and metrics instead of intuition, emotion, or observation.

This might sound obvious, but in practice, not all organizations are as data-driven as they could be. According to the global management consulting firm McKinsey & Company, data-driven companies are better at acquiring new customers, maintaining customer loyalty, and achieving above-average profitability [2].

Get started with Coursera

If you’re interested in a career in the high-growth field of data analytics, consider these top-rated courses on Coursera:

Begin building job-ready skills with the Google Data Analytics Professional Certificate. Prepare for an entry-level job as you learn from Google employees; no experience or degree is required.

Practice working with data with Macquarie University's Excel Skills for Business Specialization. Learn how to use Microsoft Excel to analyze data and make data-informed business decisions.

Deepen your skill set with Google's Advanced Data Analytics Professional Certificate. In this advanced program, you'll continue exploring the concepts introduced in the beginner-level courses, plus learn Python, statistics, and machine learning concepts.

Frequently asked questions (FAQ)

Where is data analytics used?

Just about any business or organization can use data analytics to help inform their decisions and boost their performance. Some of the most successful companies across a range of industries — from Amazon and Netflix to Starbucks and General Electric — integrate data into their business plans to improve their overall business performance.

What are the top skills for a data analyst?

Data analysis makes use of a range of analysis tools and technologies. Some of the top skills for data analysts include SQL, data visualization, statistical programming languages (like R and Python), machine learning, and spreadsheets.

Read: 7 In-Demand Data Analyst Skills to Get Hired in 2022

What is a data analyst job salary?

Data from Glassdoor indicates that the average base salary for a data analyst in the United States is $75,349 as of March 2024 [3]. How much you make will depend on factors like your qualifications, experience, and location.

Do data analysts need to be good at math?

Data analytics tends to be less math-intensive than data science. While you probably won’t need to master any advanced mathematics, a foundation in basic math and statistical analysis can help set you up for success.

Learn more: Data Analyst vs. Data Scientist: What’s the Difference?

Article sources

1. World Economic Forum. "The Future of Jobs Report 2020," https://www.weforum.org/reports/the-future-of-jobs-report-2020. Accessed November 20, 2023.

2. McKinsey & Company. "Five facts: How customer analytics boosts corporate performance," https://www.mckinsey.com/business-functions/marketing-and-sales/our-insights/five-facts-how-customer-analytics-boosts-corporate-performance. Accessed November 20, 2023.

3. Glassdoor. "Data Analyst Salaries," https://www.glassdoor.com/Salaries/data-analyst-salary-SRCH_KO0,12.htm. Accessed November 20, 2023.


Coursera staff.

Editorial Team


How to build a marketing data analysis report (template and examples)

Creating a data analysis report is an underrated yet critical skill for marketers. A marketing report can impact team, stakeholder, and company decisions, so highlighting (and omitting) the right information is crucial.

But how do you consistently churn out clear and compelling reports? This guide will help you tie your reports up in a neat little bow, ensuring alignment with and buy-in from your audience—and beneficial decisions for your end-users.


Data analysis reporting is a process that combines quantitative and qualitative data to evaluate performance, share findings, and inform future decisions. One common example is reporting on the results of a marketing campaign, but there are plenty more reasons marketers might create a data analysis report.

More examples—plus the reporting steps, tools, and template—await below, so keep reading.

Get started fast with our free monthly data analysis report template, and follow these six key steps to creating a persuasive report:

Nail down the elements: provide a title, timeframe, and summary. Present data visually and close with action points.

Determine your purpose: figure out why you're making this report so you can focus on the right information.

Identify your audience and their needs: define your readers and tailor your report to their requirements.

Put your key insights first: maximize Hotjar, a multi-product data analytics platform, to track metrics and gather meaningful insights.

Visualize your data: incorporate charts, heatmaps, and other visuals to convey facts and insights effectively.

Ask your audience for feedback: collect your audience's feedback to continuously improve your reporting approach.

How to write a data analysis report in 6 easy steps

Knowing how to write a data analysis report is vital, especially in a data-informed field like marketing. Organizing and visualizing your data helps you:

Evaluate strategies and performance

Inform future decisions and actions

Share findings and recommendations to serve users better

However, even marketers dealing with data regularly may find the task time-consuming. But we can promise you one thing: it won’t feel that way once you have a repeatable process and fewer data analysis and visualization tools to work with.

1. Nail down the elements

This chapter of our data analysis guide focuses on creating an executive report. But regardless of the type of report (we'll touch on a few more of them later), most share the same building blocks:

Title: use a straightforward title to convey your report's intent. Call it as it is, whether it's your overall marketing performance or a multi-channel marketing campaign.

Timeframe: reporting intervals include daily, weekly, monthly, quarterly, and annually. Monthly data analysis reports work best for marketing teams, clients, and executives.

TL;DR: summarize your key objectives and findings, such as insights, issues, and recommendations, in an executive summary. This sets your audience's expectations and helps busy team members focus on what matters to them.

Body: your bar charts, graphs, tables, and heatmaps go here. Add visual evidence from your analysis that supports your conclusion to win buy-in from decision-makers.

Conclusion: backed by the data in the body, state your plan for making progress with your goals. For instance, say you need additional dollars to spend on social media advertising since it's shown a consistent return on investment (ROI).

Discover actionable data, enhance business decisions

Craft compelling marketing reports fast. Collect, analyze, and report on crucial insights using Hotjar’s multi-product platform to get stakeholder buy-in and team alignment.

2. Determine your purpose

What data should you incorporate in your report? The answer lies in the purpose of your data analysis report.

Consider this: what do you hope to achieve when you share the results of your analysis? Is it to show stakeholders how customers use your products so you can improve them? Or is it to enlighten your team about what customers like so you can tailor campaigns to each segment?

Pulling the relevant data becomes a breeze once you’ve locked in your report’s primary purpose.

Collect meaningful data in real time with Hotjar

Marketers conduct quantitative data analysis to answer questions like, “How many?” or “How often?” In other words: this type of analysis involves numerical data, such as traffic and conversions.

But numbers alone don't provide the whole picture—you need to uncover the why behind them. Why did traffic from France increase yesterday? Why do certain customers rage-click on a call-to-action (CTA) button on your Demo page?

It's qualitative data that reveals the reasons customers behave a certain way. This non-numerical data includes behavioral observations, interview clips, and survey responses.

In Hotjar, an analytics platform for user behavior and digital experience insights, you can gather qualitative data via Surveys, Feedback, and Interviews, plus visualize quantitative data via Heatmaps and capture user behavior in real time with Recordings, all from a single platform.

Here, it's easier than ever to collect and analyze quantitative and qualitative data, and build a user-centric marketing report based on your analysis.

Complement quantitative data with qualitative insights

3. Identify your audience

Always determine two audience types for your report: primary and secondary.

Company executives, clients (for marketing agencies), team members, and cross-functional collaborators, such as product managers, can fit into either category, depending on your report. Once you've categorized your primary and secondary audiences, it's easier to customize the report to their needs. Here are a few tips on how to do it:

Speak their language: in any business setting, this means striking a balance between not too formal and not too casual—think business in the front, party in the back

Discuss results, not methodologies: immediately dive into the insights gleaned from your quantitative and qualitative data analysis

Present key takeaways in the summary: as we said earlier, highlight your main points at the top of your report so the reader can instantly note what they think is interesting

Place eye-catching visuals in the body: your audience may skim through and search only for additional details in the body, so ensure your data visualization is easy to interpret

📋 Need a hand? Try using a template

If you’re unsure how to design your report or prefer not to build from scratch each time you run it, use a data analysis report template. Ensure it’s a well-crafted one aimed at showing—instead of telling—your audience what works and what doesn’t.

Aside from making you look good (😎), an excellent template saves you time, and gives your readers something to rely on during each reporting period.

So, what would an extremely occupied marketer do? Streamline the creation process, of course! Plug, play, and present your insights with our free monthly data analysis report template to get started 📈.

Click the link above to make a copy of our handy template

4. Prioritize key insights

Here comes the exciting part: assembling the data to draw a clear picture for your audience. Before you discovered this guide, you might have gathered data manually from various sources, such as Google Analytics, your preferred A/B testing platform, and even Hotjar.

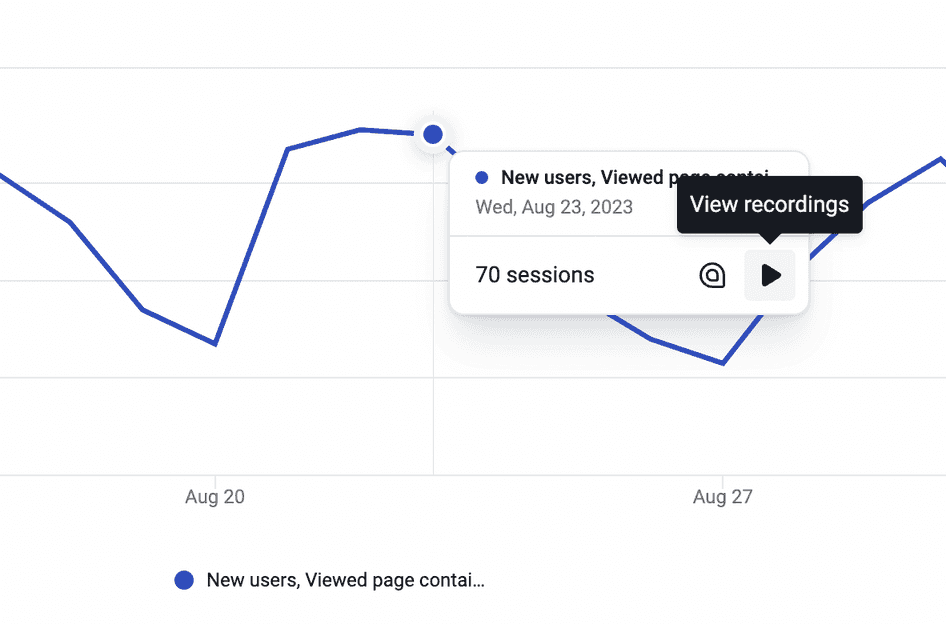

Luckily for you, there's a faster way to track and spot patterns occurring in your custom metrics. Hotjar Trends enables you to find the behavioral data you need, such as visitors who viewed either of the two landing page variations you were A/B testing, or users who rage clicked (a sign of frustration) before exiting your checkout page.

Compare your A/B test participants or new and returning users to see if one segment encountered any issues. Or view your rage clicks over time to understand what makes users frustrated. Then, click the ‘play’ icon to dive straight into your session recordings and discover the reason behind their actions.

Go from quantitative to qualitative data in a single click with Hotjar Trends

Finally, screencap the charts in Trends and link to some relevant recordings in your trend report.

A sample trend report showing rage clicks over time, created via Hotjar Trends

What else can you do within Hotjar? Use Highlights and Collections to group snippets of recordings and heatmaps to support your conclusion. Add these meaningful insights to your monthly report or share them with teammates on an ordinary day, without having to switch between multiple analytics tools.

Trends, Highlights, Recordings…will my audience remember all these names?

They may even start looking for them in every report from now on. Our tools and features are self-explanatory, so everyone, from the marketing and sales teams to clients and C-suite execs, can quickly understand them.

Personally I love using Highlights and Collections together. I see each highlight as a self-contained explanation for the case I’m making to the product team. I’ve got the video. I’ve got the tags if I need them. I’ll add comments and a thread for a response.

There’s no risk of distraction and minimal lift to get my stakeholders directly to the central issue.

And then the Collections view basically creates a visual report of all the evidence I have for a given case. Here's how much this hurts (one Highlight); click on the rest of these if you can stomach it (a Collection of Highlights).

5. Incorporate visual data

Whether you're comparing past and present conversion rates or sharing multiple data sets, it's crucial to get your point across quickly. After all, you're not the only team or department vying for your audience's attention. This is where data visualization plays a considerable role: maps and charts allow you to effectively convey your message by making your data interactive, digestible, and enjoyable.

To visualize data, use your spreadsheet of choice (for example, Excel or Google Sheets) or a dedicated platform like Tableau. You can also screenshot your data in the Hotjar Dashboard to save time and effort. Showcase relevant heatmaps, recordings, survey responses, interview snapshots, and direct feedback to drive your point home and get everyone on the same page.

6. Collect feedback from your audience

Just as Surveys and Feedback let you connect with actual customers, they also prove valuable in asking your audience’s thoughts once you’ve sent out your reports. By building a custom survey—for free—in Hotjar, you can include and analyze open-ended questions like, “How did this data analysis report help you?” and “What would you want to see in the next report?”

This enables stakeholders to give you proper feedback, especially if they didn't get the chance to speak after your presentation.

4 examples of data analysis reports

Now, we’re tackling four popular types of data analysis reports. Practice makes permanent, so let’s go over the ones you’ll likely produce regularly (you'll ace them in no time).

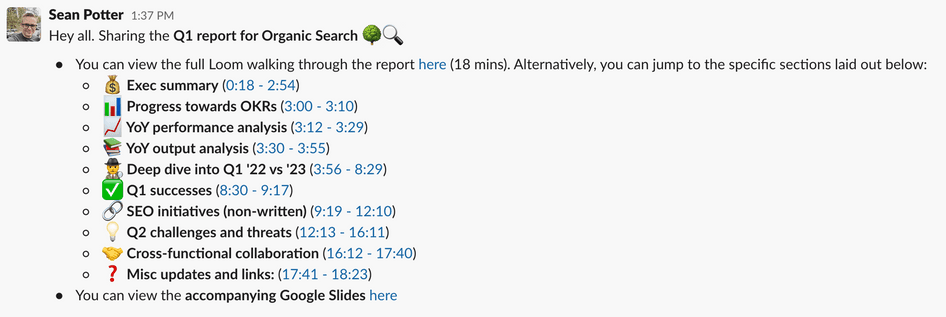

Executive report or digital marketing report

This comprehensive report combines vital insights into your marketing efforts across various channels. It tracks metrics like advertising cost, conversion rate, customer acquisition cost, and online revenue.

Remember: you'll send this document out to company executives who want to see how marketing directly contributes to the bottom line. Be sure to connect your efforts to revenue. And what better way to demonstrate your impact than showing heatmaps or recordings of people responding positively to your campaigns?
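If you want to double-check the headline numbers before they go into an executive report, the metrics named above reduce to simple ratios. The sketch below is only an illustration: the `ChannelStats` fields, the function names, and the sample figures are all made up for this example, and your own attribution rules may define cost and revenue differently.

```python
from dataclasses import dataclass


@dataclass
class ChannelStats:
    """Hypothetical monthly totals for one marketing channel."""
    name: str
    ad_cost: float        # total advertising spend
    visitors: int         # sessions attributed to the channel
    conversions: int      # purchases or sign-ups
    new_customers: int    # first-time customers acquired
    revenue: float        # online revenue attributed to the channel


def conversion_rate(s: ChannelStats) -> float:
    """Share of visitors who converted (0.0 when there is no traffic)."""
    return s.conversions / s.visitors if s.visitors else 0.0


def customer_acquisition_cost(s: ChannelStats) -> float:
    """Ad spend per new customer (infinite when nobody was acquired)."""
    return s.ad_cost / s.new_customers if s.new_customers else float("inf")


def roi(s: ChannelStats) -> float:
    """Return on investment: (revenue - cost) / cost."""
    return (s.revenue - s.ad_cost) / s.ad_cost if s.ad_cost else 0.0


# Made-up example numbers, not real benchmarks.
social = ChannelStats("social", ad_cost=2000.0, visitors=10000,
                      conversions=250, new_customers=100, revenue=5000.0)

print(f"{social.name}: conversion rate {conversion_rate(social):.1%}, "
      f"CAC ${customer_acquisition_cost(social):.2f}, ROI {roi(social):.0%}")
```

Keeping each metric in its own small function makes it easy to state, right in the report, exactly how a number was calculated.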

Search engine optimization (SEO) report

While an executive report may contain an SEO performance overview, this specialized report breaks down organic traffic in detail. Show your keyword rankings, conversion rates, and top traffic channels to explain your strategy to executives and stakeholders.

Note that you can track these essential metrics in Hotjar's Dashboard. Screenshot your customized dashboard or share it live with your audience as you discuss key insights. Pull up a recording or two or highlight customer feedback to strengthen your case.

For example, if session recordings reveal an unclear CTA has caused conversions for several landing pages to decline, you might recommend changing the CTA by running an A/B test and going with the winning variation.
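Before declaring a winning variation, it helps to check that the difference in conversion rates is unlikely to be noise. One common way to do that (an assumption here, not something your A/B testing platform necessarily uses) is a two-proportion z-test. The function name and the sample counts below are hypothetical.

```python
import math
from typing import Tuple


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates.

    conv_* are conversion counts and n_* are visitor counts per variation.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the assumption that both variations perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up counts: original CTA (A) vs. reworded CTA (B).
z, p = two_proportion_z_test(conv_a=200, n_a=5000, conv_b=260, n_b=5000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value (0.05 is a common, if arbitrary, threshold) suggests the gap between variations is worth reporting as a real effect. A large one suggests running the test longer before recommending a change.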

Social media marketing report

This data analysis report example unpacks multiple channels. Which ones are helping you spread brand awareness and enhance customer loyalty? To find out, track social key performance indicators (KPIs) like new followers, total reach, share of voice (SOV), engagement, and website referrals.

You can access relevant data and insights in your social media pages’ in-app analytics or analytics tools like Buffer and Hootsuite.
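Two of the social KPIs above are ratios you can compute yourself once you've exported the raw counts. Definitions vary between tools, so treat the formulas below as one common convention rather than the way any particular platform calculates them. The function names and numbers are hypothetical.

```python
def share_of_voice(brand_mentions: int, competitor_mentions: int) -> float:
    """Brand mentions as a share of all tracked mentions (brand + competitors)."""
    total = brand_mentions + competitor_mentions
    return brand_mentions / total if total else 0.0


def engagement_rate(interactions: int, reach: int) -> float:
    """Interactions (likes, comments, shares) per person reached."""
    return interactions / reach if reach else 0.0


# Made-up monthly counts for illustration only.
print(f"Share of voice: {share_of_voice(300, 900):.0%}")
print(f"Engagement rate: {engagement_rate(450, 9000):.1%}")
```

Whichever definition you choose, note it in the report so readers can compare month to month on the same basis.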

Marketing funnel report

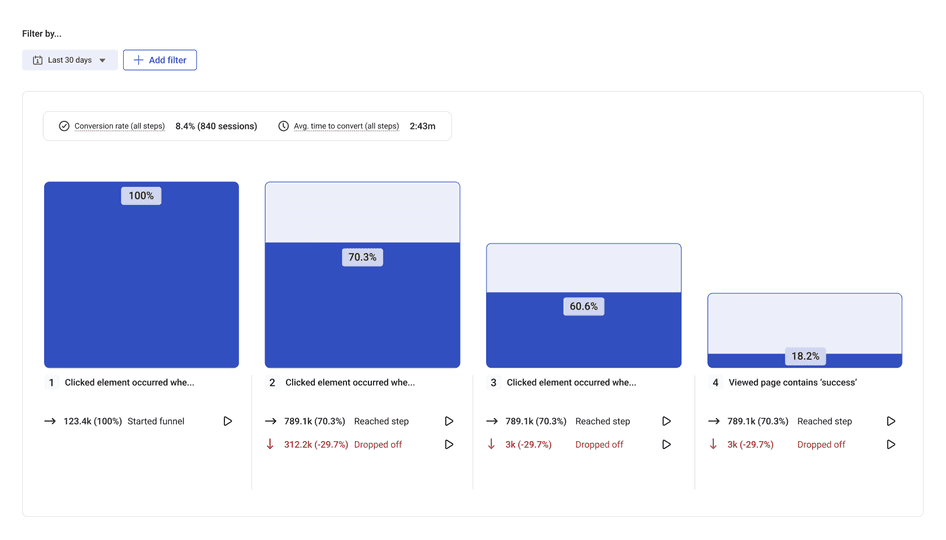

Conversion funnels allow you to home in on the few steps users take from first contact to final conversion. Here’s a basic conversion path if you’re marketing an ecommerce brand:

Homepage > category page > product page > cart > checkout > thank you page

Initially, you’ll look at how many people visit your main pages and who they are. Take your data analysis further with Hotjar Funnels, where you can measure conversion and drop-off rates. Add filters like traffic channels and user attributes to compare performance. To deepen your insights, jump into relevant recordings and see what causes users to leave before they convert.

The best part? You can gradually collect data, conduct funnel analysis, and integrate your findings into your report within Hotjar and without manually handling data: no hassle, no fuss.
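If you ever need to sanity-check funnel numbers outside a tool, conversion and drop-off rates are straightforward to derive from per-step visitor counts. The sketch below uses the ecommerce path from this section. The `funnel_report` helper and the visitor counts are invented for illustration and don't reflect how any analytics product computes its figures.

```python
from typing import Dict, List, Tuple


def funnel_report(steps: List[Tuple[str, int]]) -> List[Dict[str, object]]:
    """Compute step-to-step conversion, drop-off, and overall conversion.

    steps is an ordered list of (step name, visitor count) pairs.
    """
    if not steps:
        return []
    rows = []
    first_count = steps[0][1]
    prev_count = first_count
    for name, count in steps:
        # Share of the previous step's visitors who reached this step.
        step_conv = count / prev_count if prev_count else 0.0
        rows.append({
            "step": name,
            "visitors": count,
            "step_conversion": step_conv,
            "drop_off": 1.0 - step_conv,
            "overall_conversion": count / first_count if first_count else 0.0,
        })
        prev_count = count
    return rows


# Made-up visitor counts for the basic ecommerce conversion path.
funnel = [
    ("homepage", 10000),
    ("category page", 6000),
    ("product page", 3000),
    ("cart", 900),
    ("checkout", 450),
    ("thank you page", 360),
]

for row in funnel_report(funnel):
    print(f"{row['step']:<15} {row['visitors']:>6} "
          f"step {row['step_conversion']:.0%}  drop-off {row['drop_off']:.0%}")
```

The step with the largest drop-off is usually the best place to start watching recordings.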

Create data analysis reports that drive action

Ensure your team, stakeholders, and executives make data-informed decisions regarding your marketing campaigns and strategies with compelling data analysis reports.

Needle-moving, user-centric insights deserve the spotlight. Start with our free template and populate it with numerical and non-numerical data from Hotjar and other sources. Go ahead and tell a visual story that inspires action today.

FAQs about data analysis reports

What is a data analysis report?